Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Libraries like Ultralytics have had a great impact on deep learning, specifically on object detection. Even someone with no knowledge of computer vision can train and deploy an object detection model by combining a few things: find a dataset on Kaggle, create a notebook, activate the GPU, and then use Ultralytics for training. With roughly 50 lines of code and 4 to 5 hours, anybody can train a YOLO model and deploy it. And yes, this works for general object detection. If the dataset is clean and big enough, you end up with a model that is ready to use for most cases, and you can accurately detect common objects like cars and people most of the time. But is this true for every task?

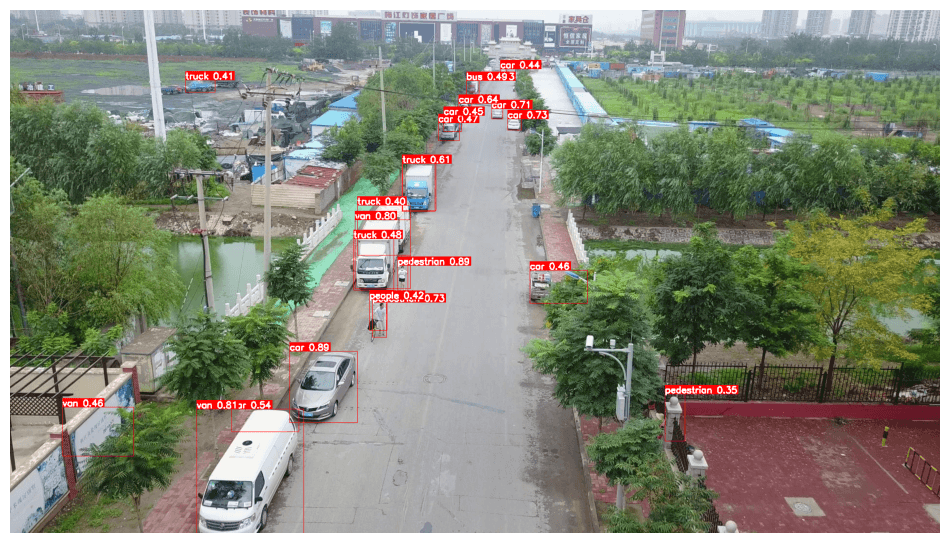

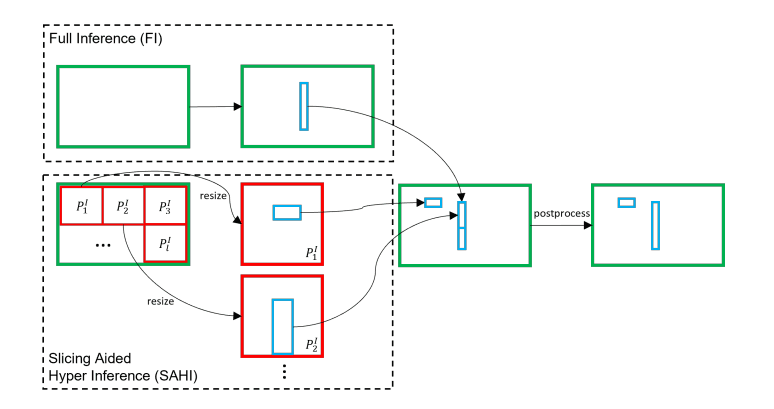

Of course not. Think about small objects: some are so small that even our eyes can't recognize them reliably. This simple approach will not work for them. There are different solutions for small object detection, and in this article I will talk about SAHI (Slicing Aided Hyper Inference). The main idea behind SAHI is to slice the image into smaller patches, run inference and training on these patches, and then post-process the results.

Consider small objects in images, like planes, insects, or people in the distance. Even in the original image, they are hard to recognize. Now think about model training: because of memory restrictions and training time, images are in most cases resized to resolutions like 640×640 or 640×480.

By default, Ultralytics resizes the original images to 640×640. Image size affects training time significantly, and with low GPU VRAM (4 GB, 6 GB, 8 GB), CUDA out-of-memory errors are common. You can work around this by reducing the batch size or the image resolution.

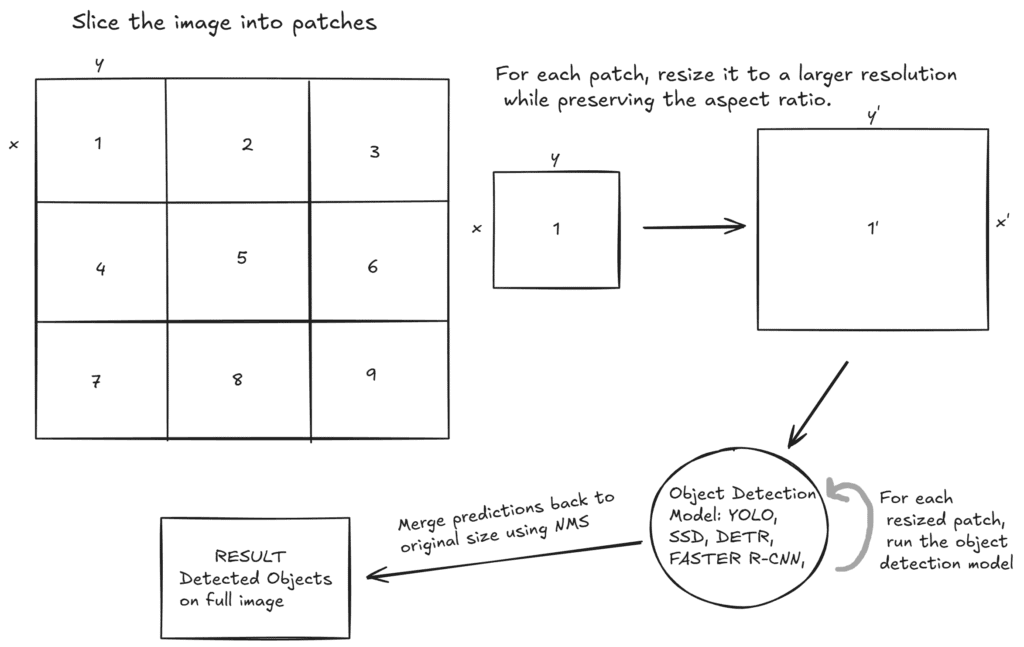

The objects were already small at the original resolution, so think about what happens when the image is resized before training: they become tiny and sometimes disappear entirely. This is where SAHI comes into play. The idea is simple yet effective: if we slice the image into smaller patches and process each patch separately, we don't have to shrink the full image to a lower resolution. After slicing, each patch is resized up to a higher resolution while preserving its aspect ratio, so the patches (and the objects inside them) become bigger. This idea has an outstanding effect on small object detection.
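To put rough numbers on this, here is a small arithmetic sketch comparing how large an object ends up after the two preprocessing options. The object size (16 px), image side (2000 px), and patch size (256 px) are hypothetical values chosen for illustration; 640 px is the default input size mentioned above.

```python
def scaled_size(obj_px: float, src_side: int, dst_side: int) -> float:
    """Object size (pixels) after an image or patch side is resized from src_side to dst_side."""
    return obj_px * dst_side / src_side


OBJECT_PX = 16      # hypothetical object width in the original image
IMAGE_SIDE = 2000   # long side of a high-resolution image
MODEL_INPUT = 640   # input size the detector runs at
PATCH_SIDE = 256    # hypothetical slice size

# Option 1: shrink the whole image so its long side becomes 640 px
full = scaled_size(OBJECT_PX, IMAGE_SIDE, MODEL_INPUT)
# Option 2: cut a 256-px patch (object untouched), then enlarge the patch to 640 px
sliced = scaled_size(OBJECT_PX, PATCH_SIDE, MODEL_INPUT)

print(f"whole image resized: {full:.1f} px")    # → whole image resized: 5.1 px
print(f"slice + upscale:     {sliced:.1f} px")  # → slice + upscale:     40.0 px
```

Under these assumptions the same object is roughly 8 times larger when it reaches the detector through a slice.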

If you have time, I strongly recommend reading the SAHI paper. It is not that long (4 pages), and the authors explain the method very clearly. You can read it here.

I also have a YouTube video about this article if you want to watch it.

You can use SAHI with any object detection model, because it doesn't change anything in the model architecture: it only slices the images, so the model stays the same. You can combine it with YOLO, Faster R-CNN, SSD, DETR, or any other object detection model that comes to mind.

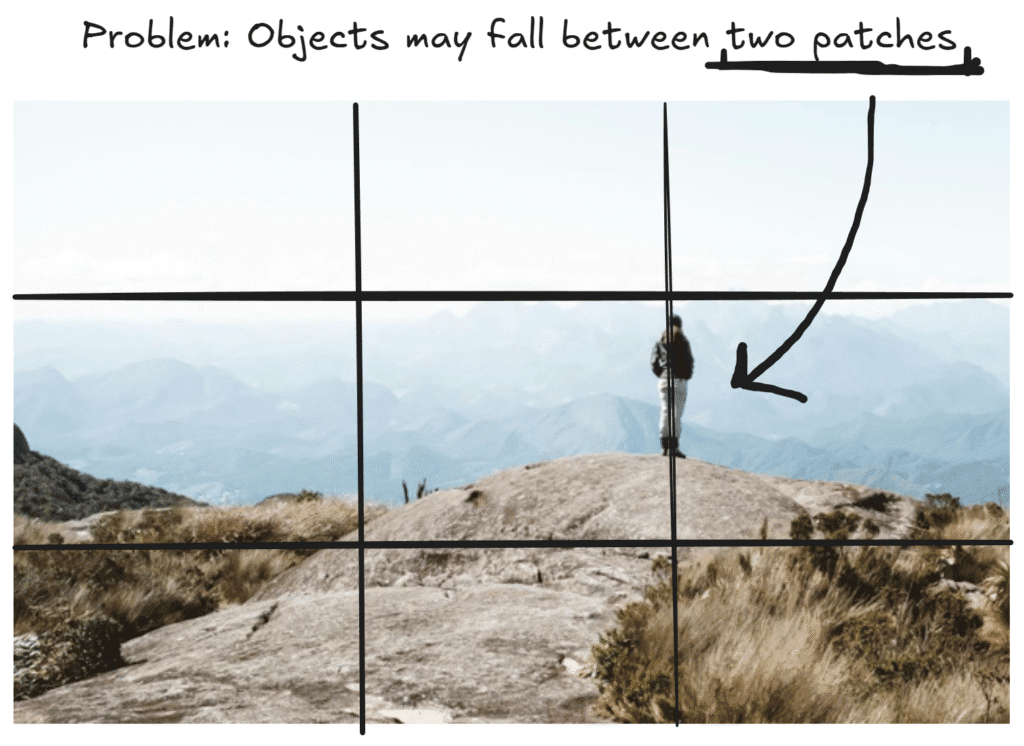

As I said before, the image is sliced into smaller patches, and each patch is processed separately. But there is one problem: what if an object lies on the border between two patches? To solve this, each patch overlaps its neighbors by a fixed ratio. At the end, NMS (Non-Maximum Suppression) is applied to eliminate duplicate detections of the same object coming from adjacent patches. The overlap ratio is a parameter you can change; the code examples in this article use 20% (0.2).
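Here is a minimal sketch of how overlapping slice windows can be laid out. It is my own re-implementation for illustration, not SAHI's code, and assumes that consecutive windows start `slice - int(ratio * slice)` pixels apart and that a window crossing the right or bottom border is shifted back inside the image. The 480×640 image size and the object position are hypothetical.

```python
def slice_windows(img_h, img_w, slice_h, slice_w, overlap_h=0.2, overlap_w=0.2):
    """Return [x_min, y_min, x_max, y_max] windows covering an img_h x img_w image.

    Neighbouring windows share int(ratio * slice) pixels. A window that would cross
    the right or bottom border is shifted back so it ends exactly at the border.
    """
    step_y = slice_h - int(overlap_h * slice_h)
    step_x = slice_w - int(overlap_w * slice_w)
    windows = []
    y = 0
    while True:
        y0 = min(y, max(0, img_h - slice_h))
        x = 0
        while True:
            x0 = min(x, max(0, img_w - slice_w))
            windows.append([x0, y0, min(x0 + slice_w, img_w), min(y0 + slice_h, img_h)])
            if x + slice_w >= img_w:
                break
            x += step_x
        if y + slice_h >= img_h:
            break
        y += step_y
    return windows


windows = slice_windows(480, 640, 256, 256)
print(len(windows))   # → 9
print(windows[:3])    # → [[0, 0, 256, 256], [205, 0, 461, 256], [384, 0, 640, 256]]

# A hypothetical object near a slice border (x1, y1, x2, y2) is fully visible in two slices,
# which is exactly why duplicates appear and NMS is needed afterwards.
obj = (220, 100, 250, 130)
inside = [w for w in windows
          if w[0] <= obj[0] and obj[2] <= w[2] and w[1] <= obj[1] and obj[3] <= w[3]]
print(len(inside))    # → 2
```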

The paper proposes two approaches:

- Slicing Aided Hyper Inference
- Slicing Aided Fine-tuning

So far, I have described the main idea behind the first approach, but there is more to it. We have an image, and we slice it into smaller patches. The important step is resizing these patches to a higher resolution: the small patch becomes bigger, and so does the object inside it. The object detection model then runs on these higher-resolution patches. This approach makes sense for small objects, especially small objects in high-resolution images.
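The whole inference loop (detect on each upscaled slice, map boxes back to the full image, remove duplicates) can be sketched in plain Python. This is an illustration, not SAHI's implementation: `fake_detector` is a hypothetical stand-in for a real model that reports boxes in upscaled-patch pixels, and duplicates are merged with plain greedy NMS. SAHI lets you choose its post-processing through the `postprocess_type` parameter of `get_sliced_prediction`. If your detector already returns boxes in the patch's own pixels (Ultralytics does this after its internal resize), pass `upscale=1.0`.

```python
from typing import Callable, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # x1, y1, x2, y2
Detection = Tuple[Box, float]            # (box, confidence score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two xyxy boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(dets: List[Detection], iou_thr: float = 0.5) -> List[Detection]:
    """Greedy NMS: keep the best-scoring box, drop later boxes that overlap a kept one."""
    kept: List[Detection] = []
    for det in sorted(dets, key=lambda d: d[1], reverse=True):
        if all(iou(det[0], k[0]) < iou_thr for k in kept):
            kept.append(det)
    return kept


def sliced_inference(windows: Sequence[Sequence[float]],
                     detect_patch: Callable[[Sequence[float], float], List[Detection]],
                     upscale: float, iou_thr: float = 0.5) -> List[Detection]:
    """Detect on every upscaled window, map boxes back to full-image pixels, merge with NMS."""
    merged: List[Detection] = []
    for window in windows:
        x0, y0 = window[0], window[1]
        for (bx1, by1, bx2, by2), score in detect_patch(window, upscale):
            # undo the upscaling, then shift by the window's top-left corner
            box = (x0 + bx1 / upscale, y0 + by1 / upscale,
                   x0 + bx2 / upscale, y0 + by2 / upscale)
            merged.append((box, score))
    return nms(merged, iou_thr)


# Hypothetical detector: "sees" one object whenever it lies fully inside the window
OBJECT: Box = (220.0, 100.0, 250.0, 130.0)

def fake_detector(window, upscale):
    x0, y0, x1, y1 = window
    if x0 <= OBJECT[0] and OBJECT[2] <= x1 and y0 <= OBJECT[1] and OBJECT[3] <= y1:
        local = tuple((c - off) * upscale for c, off in zip(OBJECT, (x0, y0, x0, y0)))
        return [(local, 0.9 if x0 == 0 else 0.8)]
    return []


windows = [(0, 0, 256, 256), (205, 0, 461, 256)]  # two overlapping slices
print(sliced_inference(windows, fake_detector, upscale=2.5))
# → [((220.0, 100.0, 250.0, 130.0), 0.9)]
```

Both slices detect the same object; after mapping back to full-image coordinates the two boxes coincide, and NMS keeps only the higher-scoring one.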

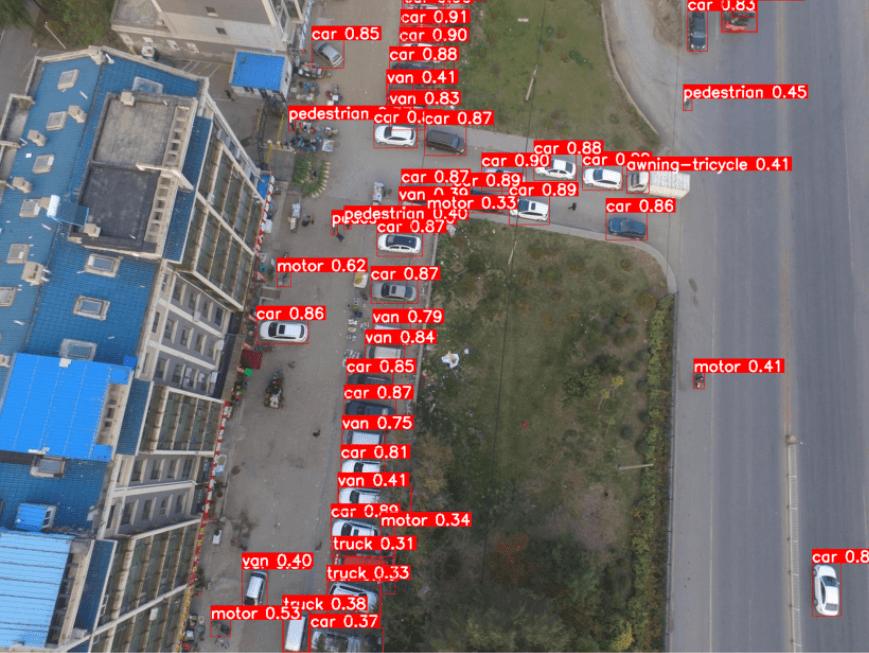

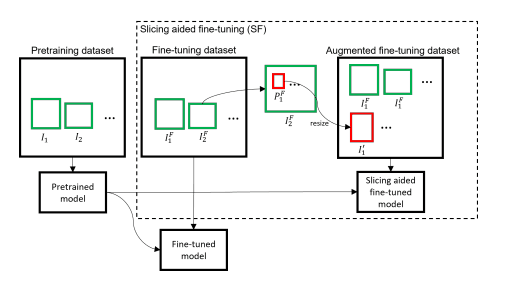

What about the ‘Slicing Aided Fine-tuning’ approach? As the name suggests, it is about training. Before training, slice the images into smaller patches, resize these patches to a larger resolution, and then train an object detection model on both these larger patches and the full images. It makes intuitive sense: you have a super-small object in a high-resolution image. You divide the image into smaller patches and resize them to a higher resolution, so the super-small object becomes larger. Training the detector on these patches therefore increases accuracy for small objects.
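The key bookkeeping in this step is carrying every ground-truth box into each slice it touches. A minimal sketch, assuming COCO-style `[x, y, w, h]` boxes in full-image pixels; the `min_area_ratio` rule (visible area compared with the box's original area) mirrors my reading of the `slice_coco` docstring, and the slice windows and boxes are hypothetical.

```python
from typing import List, Optional, Sequence


def bbox_to_slice(bbox: Sequence[float], window: Sequence[int],
                  min_area_ratio: float = 0.1) -> Optional[List[float]]:
    """Map a COCO-style [x, y, w, h] box (full-image pixels) into a slice window.

    The box is clipped to the window and shifted into the window's frame. It is
    dropped (None) if it misses the window or if the visible part keeps less than
    min_area_ratio of the box's ORIGINAL area.
    """
    x, y, w, h = bbox
    sx0, sy0, sx1, sy1 = window
    cx0, cy0 = max(x, sx0), max(y, sy0)
    cx1, cy1 = min(x + w, sx1), min(y + h, sy1)
    if cx1 <= cx0 or cy1 <= cy0:
        return None  # no overlap with this slice
    if (cx1 - cx0) * (cy1 - cy0) / (w * h) < min_area_ratio:
        return None  # only a sliver of the object is visible
    return [cx0 - sx0, cy0 - sy0, cx1 - cx0, cy1 - cy0]


left, right = (0, 0, 640, 640), (512, 0, 1152, 640)  # two overlapping 640-px slices
print(bbox_to_slice([600, 100, 80, 40], left))   # → [600, 100, 40, 40]
print(bbox_to_slice([600, 100, 80, 40], right))  # → [88, 100, 80, 40]
print(bbox_to_slice([636, 100, 100, 40], left))  # → None
```

Upscaling the patch afterwards does not change labels stored in normalized (YOLO-style) coordinates, so the shift and clip above are the only geometric changes needed.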

Now, I will show you the demos of the two approaches suggested in the paper. First, I will show you how you can combine YOLO models with SAHI to improve small object detection inference. Next, I will show how to perform Slicing Aided Fine-tuning on your dataset to train more robust models for small object detection.

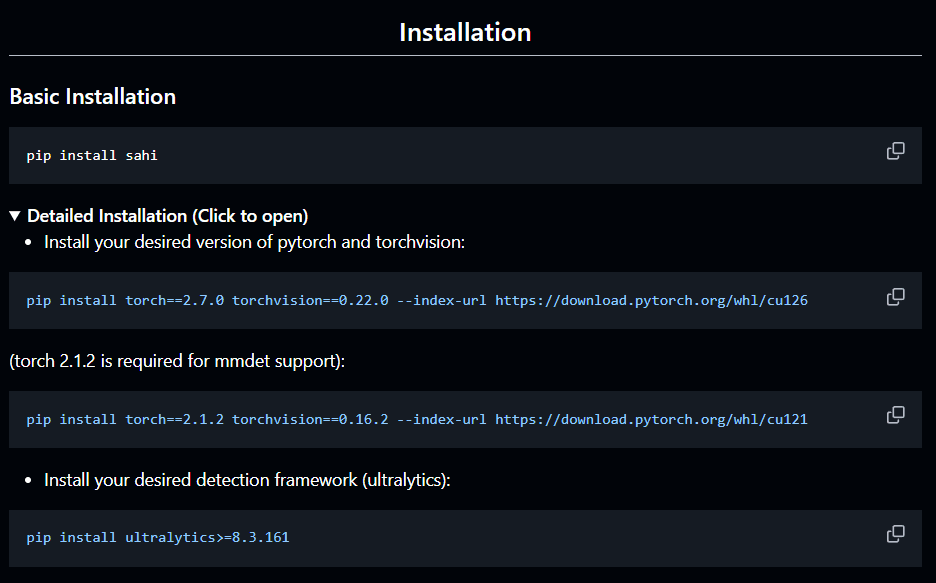

Installation is not that complex; you need a few libraries. You should create a GPU-supported PyTorch environment for training and faster inference. I already have an article about it; you can read it.

```bash
pip install sahi ultralytics
```

Okay, let's start with slicing aided inference. I talked about many things, like resizing images to lower and higher resolutions and overlapping regions. We don't have to deal with any of these, because SAHI is user-friendly, just like Ultralytics.

First, create an instance of the UltralyticsDetectionModel class to load your YOLO model, then use the get_sliced_prediction function for inference. I used a pretrained YOLO model (yolov8n.pt). If you want to use SAHI with a custom model or any other pretrained model, nothing changes.

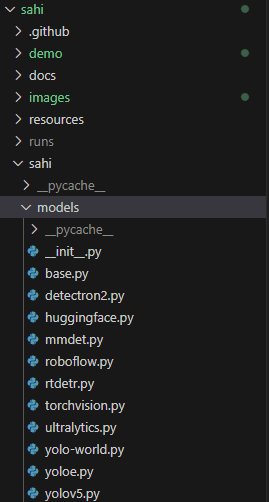

UltralyticsDetectionModel (models/ultralytics.py) inherits from the DetectionModel class (models/base.py). I strongly recommend checking the source code for a better understanding.

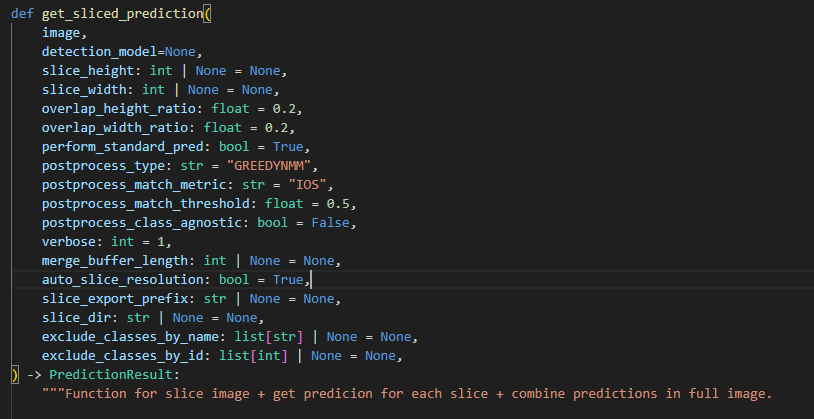

You can find all the necessary information about the get_sliced_prediction function in the predict.py file.

Now let's run inference with SAHI. You can change model_path, device, and the path to the test image.

```python
from sahi.models.ultralytics import UltralyticsDetectionModel
from sahi.predict import get_sliced_prediction

# Load the model
detection_model = UltralyticsDetectionModel(
    model_path="yolov8n.pt",
    confidence_threshold=0.3,
    device="cuda"
)

# Run inference
result = get_sliced_prediction(
    r"images/cars.jpg",
    detection_model,
    slice_height=256,
    slice_width=256,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2
)
```

To visualize the results, you can loop through result.object_prediction_list to get the bounding box coordinates, labels, and confidence scores, and then draw the boxes and write the labels on the image yourself. Alternatively, you can call the export_visuals method directly; it saves the output image to the export_dir path.

```python
for pred in result.object_prediction_list:
    # bounding box information
    bbox = pred.bbox.to_xyxy()
    x1, y1, x2, y2 = map(int, bbox)
    # class name
    label = pred.category.name
    # score
    score = pred.score.value
    print(f"{label} {score:.2f} ({x1}, {y1}, {x2}, {y2})")

# export_visuals function for visualization
result.export_visuals(export_dir="runs/predictions/")
```

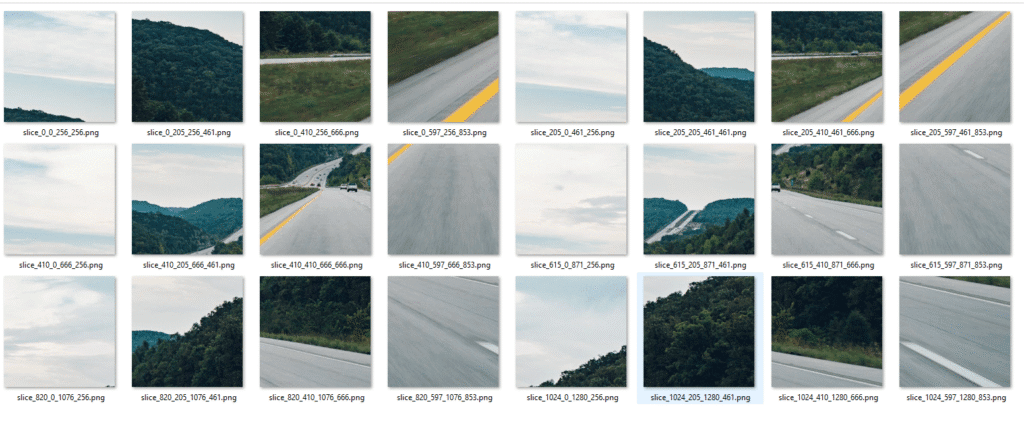

There is another useful function named slice_image. You can use it to save all the patches, which helps you visualize and understand SAHI better. Here is a quick demo.

```python
# Visualize SAHI Slicing Process
from sahi.slicing import slice_image

SLICE_HEIGHT = 256
SLICE_WIDTH = 256

# Slice the image and save slices to output directory
slice_image_result = slice_image(
    image="test_image.jpg",
    output_file_name="slice",
    output_dir="runs/sliced_images/",
    slice_height=SLICE_HEIGHT,
    slice_width=SLICE_WIDTH,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2,
)

# Print detailed information
print(f"Original Image Size: {slice_image_result.original_image_height}x{slice_image_result.original_image_width} (HxW)")
print(f"Slice Size: {SLICE_HEIGHT}x{SLICE_WIDTH} (HxW)")
print(f"Total Slices Created: {len(slice_image_result.images)}")
print(f"Slices Saved To: {slice_image_result.image_dir}")
```

Now it is time for the second part. The idea is close to the first one; the main difference is that slicing happens before the training stage rather than at inference time. Let's say we have a dataset that contains 1,000 high-resolution images. First, slice the images into patches just like in the first approach, and then upscale them. Then train the object detection model on these upscaled patches. Depending on the slicing parameters and image size, you will end up with around 10,000 to 50,000 training images. This approach works when you have a high-resolution dataset. If your dataset contains low-resolution images, like 640×640, slicing doesn't make much sense because the images are already small. Slicing aided fine-tuning is worth it when your images are high resolution, like 1920×1080 or 2160×1440.
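You can estimate how many slices a dataset will produce before running anything. A minimal sketch, assuming consecutive windows start `slice_size - int(overlap * slice_size)` pixels apart and the last window is shifted back to end at the border; this is my own formula, not taken from SAHI's code.

```python
import math


def slices_per_axis(length: int, slice_size: int, overlap_ratio: float) -> int:
    """Windows needed along one axis so that the last one reaches the border."""
    if length <= slice_size:
        return 1
    step = slice_size - int(overlap_ratio * slice_size)
    return math.ceil((length - slice_size) / step) + 1


def slices_per_image(height: int, width: int, slice_size: int = 640, overlap: float = 0.2) -> int:
    return slices_per_axis(height, slice_size, overlap) * slices_per_axis(width, slice_size, overlap)


per_image = slices_per_image(1500, 2000)  # 3 rows x 4 columns
print(per_image)        # → 12
print(772 * per_image)  # → 9264
```

With 640-px slices and 20% overlap, a 2000×1500 image yields 12 slices, which is in line with the roughly 9,300 slices reported later for 772 images.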

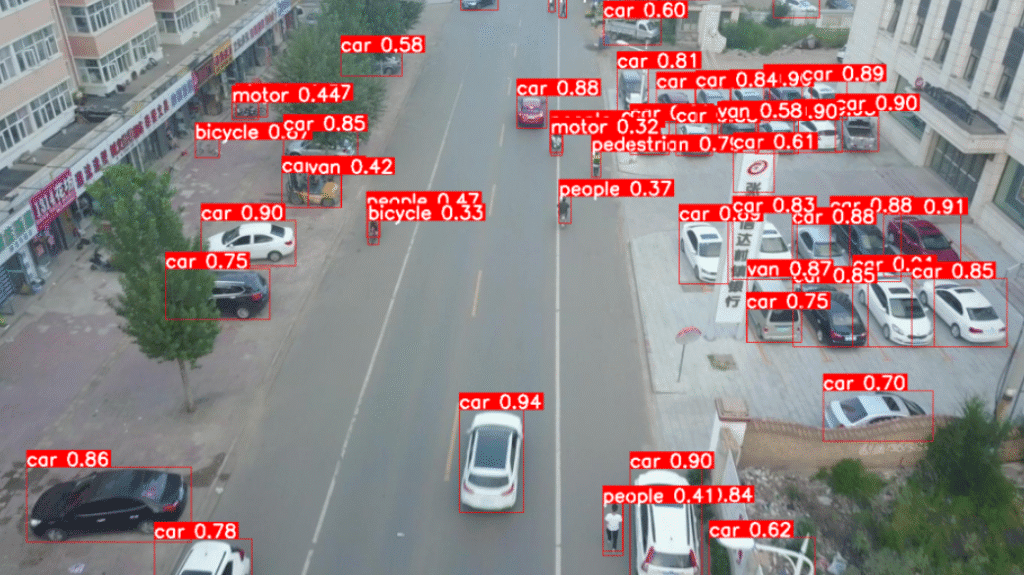

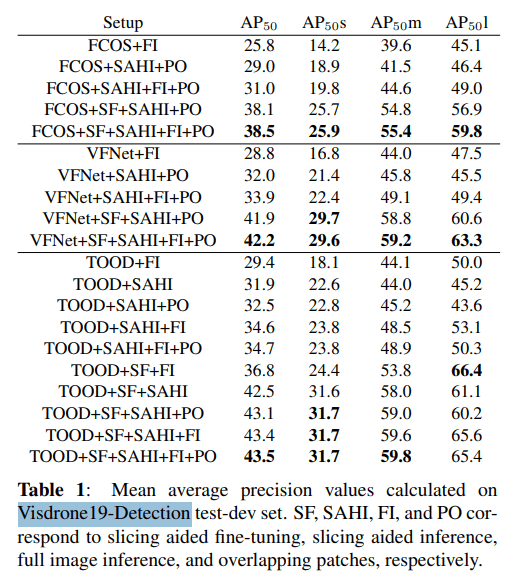

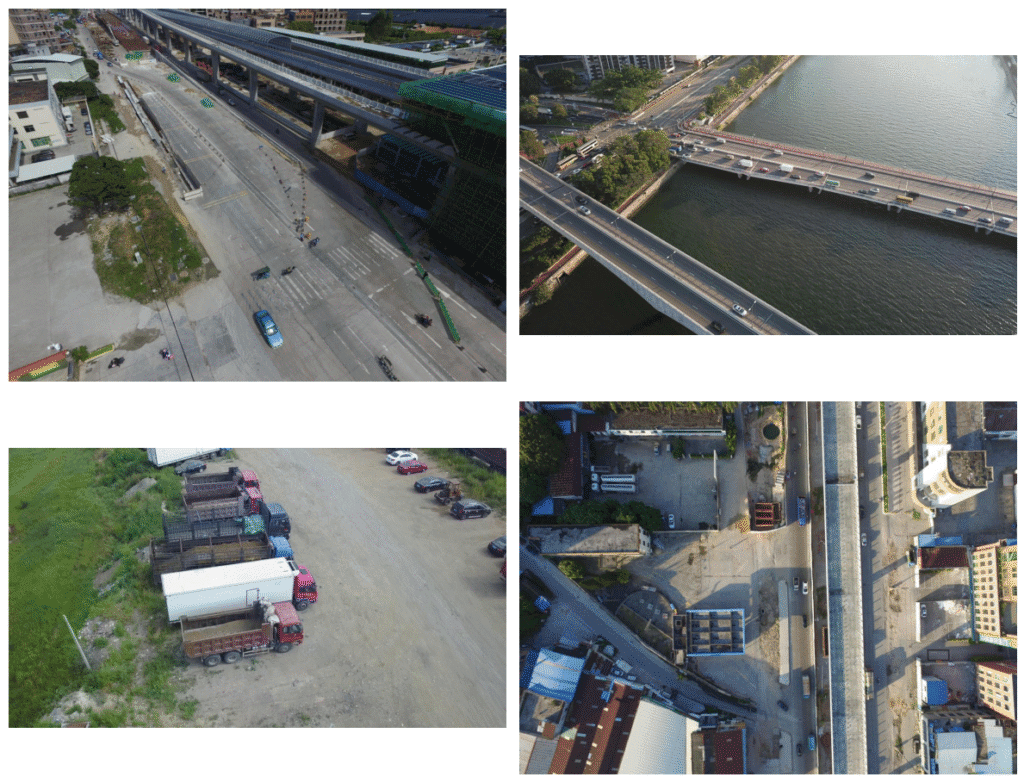

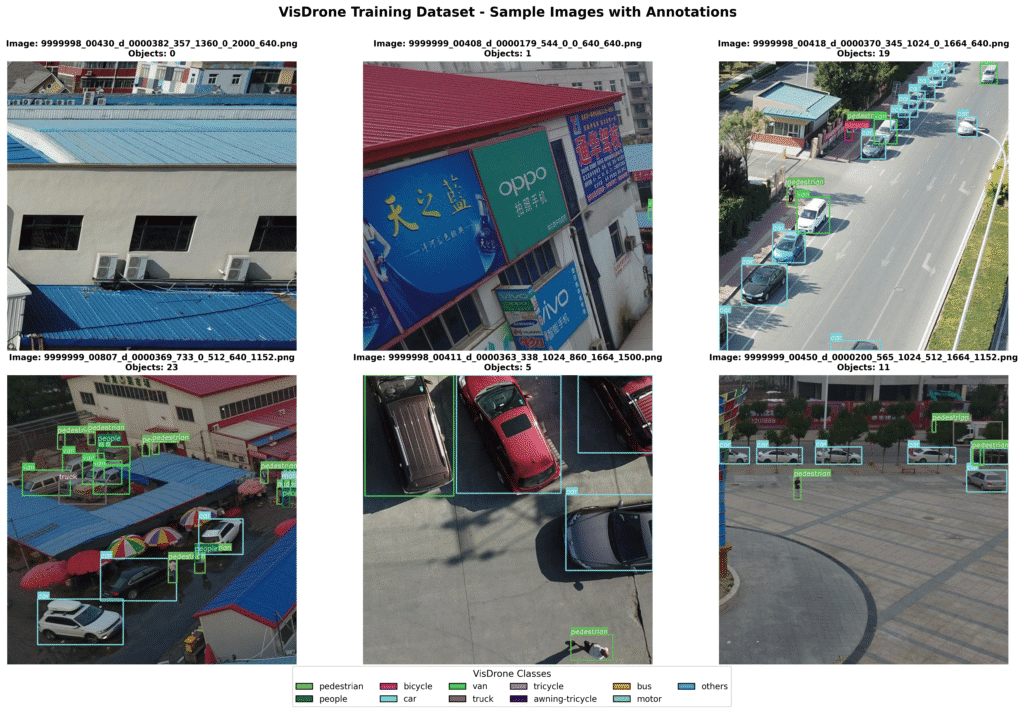

I will use the VisDrone19-Detection dataset for fine-tuning, since the benchmarks in the paper were performed on this dataset. The VisDrone19-Detection dataset contains high-resolution images captured by drones.

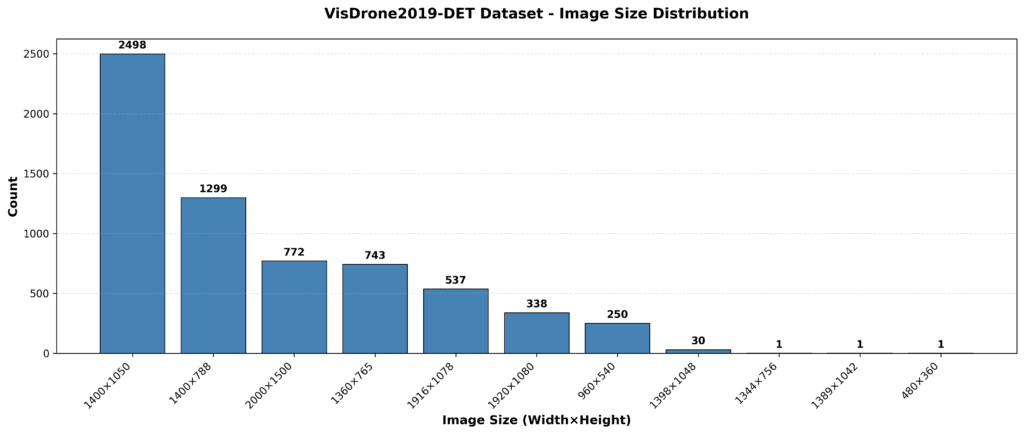

The dataset contains different resolutions, such as 1400×1050, 1920×1080, and 2000×1500 high-resolution images. I decided to use only the 2000×1500 images because slicing them increases the number of images significantly, and my GTX 1660 Ti Max-Q GPU will probably burn out after two days of training :/

You can see the image resolution distribution of the VisDrone19-Detection dataset in the chart below.

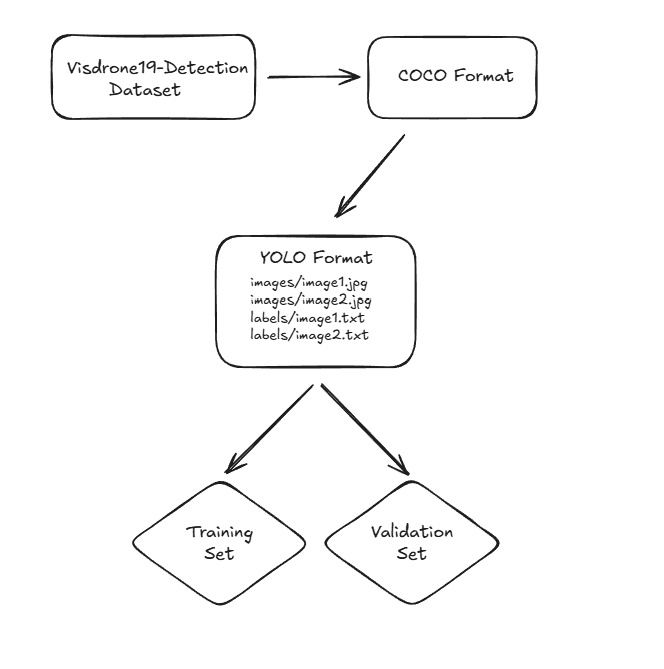

The VisDrone19-Detection dataset is not in COCO format. If you don't want to spend time converting your dataset, I recommend choosing a dataset that is already in COCO format. I converted the VisDrone19-Detection dataset to COCO format myself, but I won't share that code here because it isn't the focus of this article.
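If you do need to convert it yourself, here is a minimal sketch, not the converter used for this article. It assumes the VisDrone-DET ground-truth line format `<bbox_left>,<bbox_top>,<bbox_width>,<bbox_height>,<score>,<object_category>,<truncation>,<occlusion>`, with category 0 for ignored regions, 1 to 10 for the classes listed below, and 11 for "others". Dropping categories 0 and 11 is a choice made for this sketch, and the example lines are made up.

```python
import json
from typing import Dict, List, Optional, Tuple

# Assumed VisDrone-DET class ids 1..10 (0 = ignored region, 11 = others)
VISDRONE_CLASSES = ["pedestrian", "people", "bicycle", "car", "van",
                    "truck", "tricycle", "awning-tricycle", "bus", "motor"]


def parse_visdrone_line(line: str) -> Optional[Tuple[List[int], int]]:
    """Return ([x, y, w, h], category_id), or None for lines this sketch skips."""
    if not line.strip():
        return None
    fields = [int(v) for v in line.strip().split(",") if v != ""]
    left, top, width, height, _score, category = fields[:6]
    if category in (0, 11) or width <= 0 or height <= 0:
        return None  # drop ignored regions and "others" (a choice made here)
    return [left, top, width, height], category


def visdrone_to_coco(images: List[Tuple[str, int, int, List[str]]]) -> Dict:
    """images: (file_name, width, height, annotation lines) for each image."""
    coco = {
        "images": [], "annotations": [],
        "categories": [{"id": i + 1, "name": n} for i, n in enumerate(VISDRONE_CLASSES)],
    }
    for image_id, (file_name, width, height, lines) in enumerate(images, start=1):
        coco["images"].append({"id": image_id, "file_name": file_name,
                               "width": width, "height": height})
        for line in lines:
            parsed = parse_visdrone_line(line)
            if parsed is None:
                continue
            bbox, category = parsed
            coco["annotations"].append({
                "id": len(coco["annotations"]) + 1, "image_id": image_id,
                "category_id": category,  # keep VisDrone ids 1..10 as COCO ids
                "bbox": bbox,             # VisDrone already stores x, y, w, h
                "area": bbox[2] * bbox[3], "iscrowd": 0,
            })
    return coco


# Made-up lines in the assumed format (not real VisDrone annotations)
coco = visdrone_to_coco([("0000001.jpg", 2000, 1500,
                          ["684,8,273,116,1,4,0,0", "0,0,50,50,0,0,0,0"])])
print(json.dumps(coco["annotations"]))
```

Writing the result with `json.dump` gives a file that `slice_coco` can take as its annotation input.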

In SAHI, there is a function called slice_coco. This function takes a dataset that is in COCO format, slices the images, and then creates a dataset in COCO format.

You can change the coco_annotation_path, IMAGE_DIR, and SLICED_OUTPUT_DIR variables.

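```python
# Slice the COCO dataset using SAHI
from sahi.slicing import slice_coco

# Placeholder paths (hypothetical): change them to match your setup
coco_annotation_path = "visdrone_train_coco.json"
IMAGE_DIR = "visdrone/train/images/"
SLICED_OUTPUT_DIR = "visdrone_sliced/"

# Slice the COCO dataset
coco_dict, coco_path = slice_coco(
    coco_annotation_file_path=coco_annotation_path,
    image_dir=IMAGE_DIR,
    output_coco_annotation_file_name="visdrone_train_sliced.json",
    output_dir=SLICED_OUTPUT_DIR,
    ignore_negative_samples=False,  # Keep slices without annotations
    slice_height=640,
    slice_width=640,
    overlap_height_ratio=0.2,  # 20% overlap
    overlap_width_ratio=0.2,  # 20% overlap
    min_area_ratio=0.1,  # Drop annotations that keep less than 10% of their original area after cropping
    verbose=True
)

print(f"Sliced annotations saved to: {coco_path}")
```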

The output is a folder containing all the sliced images plus one annotation file in JSON format. Both are written to output_dir, and the returned coco_path gives you the exact path of the annotation file.

Once you have a dataset in COCO format, everything becomes quite easy. COCO format is like print("hello world") in deep learning. Various tools can convert COCO to other dataset formats, but I don't think you need them: a simple script can convert COCO to any other format.

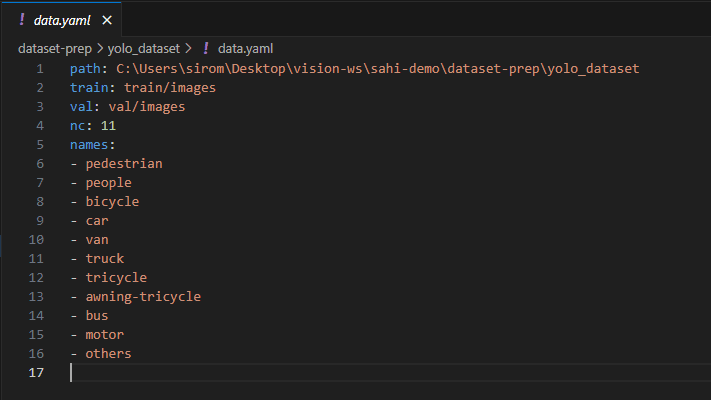

I am going to train a YOLO model; therefore, I converted the dataset to YOLO format.

(VisDrone19-Detection dataset → COCO format → YOLO format)
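For the last step, here is a minimal COCO-to-YOLO conversion sketch (not necessarily the script used for this article). YOLO labels are one line per object, `<class> <x_center> <y_center> <width> <height>`, normalized by the image size, with 0-based contiguous class ids. The toy `coco` dictionary in the test mimics the structure `slice_coco` returns; loading your real JSON with `json.load` would replace it.

```python
from pathlib import Path
from typing import Dict, List


def coco_to_yolo(coco: Dict) -> Dict[str, List[str]]:
    """Map each image's file name to its YOLO label lines (normalized to [0, 1])."""
    images = {img["id"]: img for img in coco["images"]}
    # YOLO class ids must be 0-based and contiguous; COCO category ids need not be.
    cat_ids = sorted(c["id"] for c in coco["categories"])
    to_cls = {cid: i for i, cid in enumerate(cat_ids)}
    labels: Dict[str, List[str]] = {img["file_name"]: [] for img in coco["images"]}
    for ann in coco["annotations"]:
        img = images[ann["image_id"]]
        x, y, w, h = ann["bbox"]
        W, H = img["width"], img["height"]
        labels[img["file_name"]].append(
            f"{to_cls[ann['category_id']]} {(x + w / 2) / W:.6f} {(y + h / 2) / H:.6f} "
            f"{w / W:.6f} {h / H:.6f}"
        )
    return labels


def write_yolo_labels(labels: Dict[str, List[str]], label_dir: str) -> None:
    """Write one <image stem>.txt per image; an empty file marks a background image."""
    out = Path(label_dir)
    out.mkdir(parents=True, exist_ok=True)
    for file_name, lines in labels.items():
        text = "\n".join(lines) + ("\n" if lines else "")
        (out / (Path(file_name).stem + ".txt")).write_text(text)
```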

You can split your dataset into training and validation sets at any ratio you want. You can see a few examples from the sliced dataset created by the slice_coco function.
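One caution when splitting: overlapping slices cut from the same original image share pixels, so a purely random per-file split can leak near-duplicates into the validation set. A minimal sketch that splits by source image instead. It assumes slice names of the form `<source>_<x_min>_<y_min>_<x_max>_<y_max>.png`; I believe SAHI names its slices after the source image plus the slice coordinates, but check your output folder and adjust `source_of` to match.

```python
import random
from collections import defaultdict
from typing import Callable, Iterable, List, Tuple


def split_by_source(file_names: Iterable[str], source_of: Callable[[str], str],
                    val_ratio: float = 0.2, seed: int = 0) -> Tuple[List[str], List[str]]:
    """Split slice files into (train, val) so that all slices cut from the same
    original image land on the same side of the split."""
    groups = defaultdict(list)
    for name in file_names:
        groups[source_of(name)].append(name)
    sources = sorted(groups)
    random.Random(seed).shuffle(sources)  # reproducible shuffle
    n_val = int(len(sources) * val_ratio)
    val_sources = set(sources[:n_val])
    train = sorted(n for s in sources if s not in val_sources for n in groups[s])
    val = sorted(n for s in val_sources for n in groups[s])
    return train, val


# Hypothetical slice names: 5 source images, 3 slices each
files = [f"img{i}_{x}_0_{x + 640}_640.png" for i in range(5) for x in (0, 512, 1024)]
train, val = split_by_source(files, lambda f: f.rsplit("_", 4)[0])
print(len(train), len(val))  # → 12 3
```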

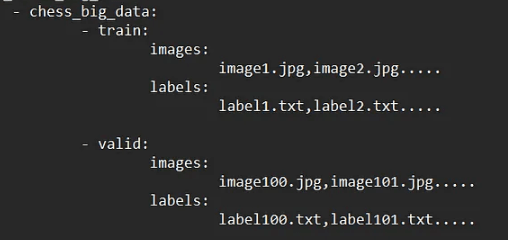

Before starting training, there are a few additional steps. Since I am going to train a YOLO model, I will create a YAML file that contains information about the dataset.
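A minimal sketch of generating that YAML file. It assumes the (hypothetical) layout `<root>/images/{train,val}` with matching `<root>/labels/{train,val}` folders, which is where Ultralytics looks for labels next to the images, and assumes the 10 VisDrone-DET object classes; adjust both to your own conversion.

```python
from pathlib import Path

# Assumed class list (10 VisDrone-DET object classes); must match your label ids
CLASS_NAMES = ("pedestrian", "people", "bicycle", "car", "van",
               "truck", "tricycle", "awning-tricycle", "bus", "motor")


def dataset_yaml(root: str, class_names=CLASS_NAMES) -> str:
    """Build an Ultralytics-style dataset YAML for a root/images/{train,val} layout."""
    lines = [
        f"path: {Path(root).resolve().as_posix()}",  # dataset root
        "train: images/train",                       # relative to path
        "val: images/val",
        "names:",
    ]
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


# Writes dataset.yaml in the current folder ("visdrone_yolo" is a hypothetical root)
Path("dataset.yaml").write_text(dataset_yaml("visdrone_yolo"))
```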

Okay, now we can start training on our sliced dataset. I will use yolo11n.pt as a pretrained model, but you are free to use other models.

```python
from ultralytics import YOLO

# Initialize YOLO11 model, nano model
model = YOLO('yolo11n.pt')

# Train the model
results = model.train(
    data="dataset.yaml",  # path to the yaml file
    epochs=50,
    imgsz=640,
    batch=16,
    name='yolo11_visdrone_sliced',  # output folder name
    project='runs/train',
    device='cuda',
    patience=10,
    save=True,
    plots=True,
    verbose=True
)
```

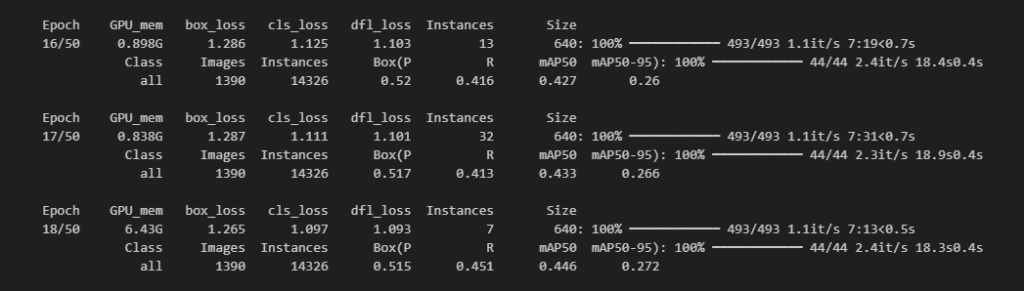

Training started. Even though I only used the 2000×1500 images from the VisDrone19-Detection dataset, one epoch took approximately 8 minutes. There were 772 images at 2000×1500 resolution, and after slicing that number grew to 9,300. Training finished after 8 hours.

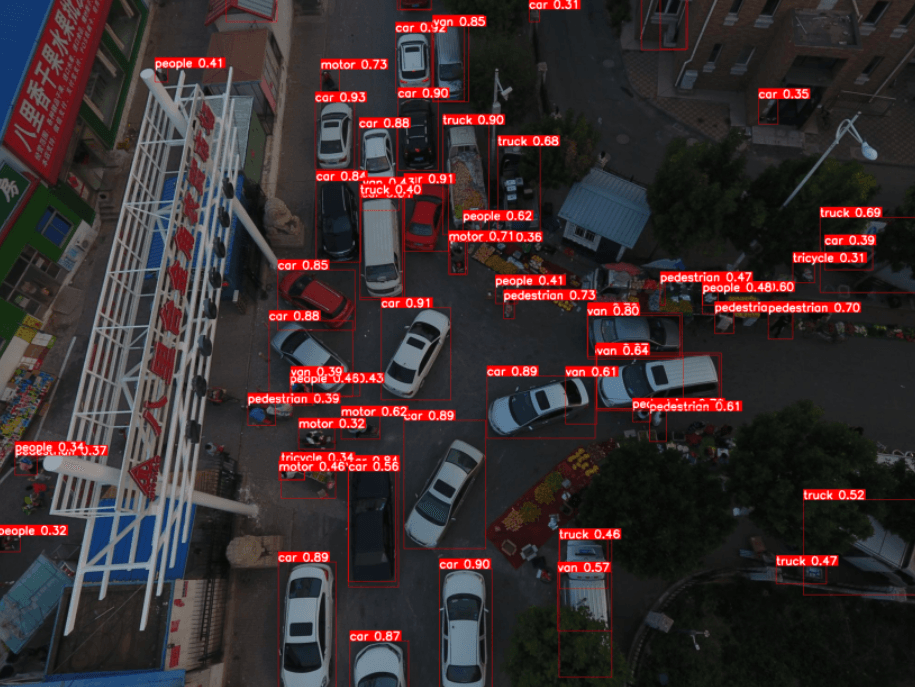

Now, it is time to test the new model. Again, I will use the get_sliced_prediction function with the newly trained model.

```python
from sahi import AutoDetectionModel
from sahi.predict import get_sliced_prediction

best_model_path = "runs/train/yolo11_visdrone_sliced/weights/best.pt"
test_image = "../test_image.jpg"

# Initialize SAHI detection model with fine-tuned model
detection_model = AutoDetectionModel.from_pretrained(
    model_type='ultralytics',
    model_path=best_model_path,
    confidence_threshold=0.3,
    device="cuda",  # if you don't have a GPU-supported env, use "cpu"
)

# Perform sliced prediction
result = get_sliced_prediction(
    test_image,
    detection_model,
    slice_height=640,
    slice_width=640,
    overlap_height_ratio=0.2,
    overlap_width_ratio=0.2
)

# Save the result
result.export_visuals(export_dir="runs/predictions/")
```

Okay, that’s it from me. I hope you liked it, and if you have any questions, feel free to ask me (siromermer@gmail.com).